A week after Trump was banned from several social media platforms, election fraud misinformation online fell 73%, according to one source. Was it eye-opening? Yes, but is that good or bad?

January 6, 2021 was, to borrow a line from a famous speech by former president Franklin Roosevelt, a date which will live in infamy. Two days later, Twitter announced that it had permanently banned the @realDonaldTrump account for violating its “Glorification of Violence” policy. Other social media companies were quick to follow suit. Within a matter of days, Facebook, Instagram, Reddit, Twitch, TikTok, Snapchat, Shopify and YouTube all either banned Trump’s accounts or (in YouTube’s case) stepped up enforcement against election misinformation and claims of voter fraud. Both Google and Apple removed far-right social network Parler from their respective app stores, and Amazon Web Services stopped providing cloud services for Parler. All cited violations of their Acceptable Use policies or their policies against the promotion of violence.

A week later, online misinformation about election fraud plummeted 73 percent—from 2.5 million mentions to 688,000 mentions across several social media sites—according to research done by Zignal Labs, a San Francisco-based research analytics firm. While it’s tragic that it took an assault on the U.S. Capitol and the deaths of five people to get to this point, it’s also eye-opening that private Big Tech firms have this much power over the dissemination of political speech in this country.

This fact has not gone unnoticed by one of the biggest social media platforms on the Internet today. Nick Clegg, Facebook’s vice president for global affairs and communications, told NPR’s All Things Considered, “There has been, in my view, legitimate commentary, not only here in the U.S., but crucially from leaders around the world [about Trump’s suspension]. Many of those leaders and other commentators have said, look, they might agree with the steps we took, but they worry about what they view as unaccountable power of private companies making big decisions about political speech. And we agree with them.”

The Twitter account CentristPolitics.net came at the topic from a different angle, posting a tweet on January 23, 2021, that read as follows:

Of the five replies that the tweet received, the most direct answer came from a Twitter user named Will Slack, who wrote:

We’ll get to the First Amendment issue in a minute, but it’s noteworthy that both Facebook’s Clegg and CentristPolitics.net came to the same conclusion: There ought to be a law governing this. As you can see from the above post, CentristPolitics.net asked this question as a way of introducing their blog post on the subject in which they claim that Direct-to-Citizen political speech is a new type of political speech, and as such, should be regulated by new laws.

Clegg, on the other hand, told NPR that governments should set “democratically agreed standards by which we could take these decisions,” rather than leaving the decision up to the social media companies themselves. However, creating such laws would take time. So what does Clegg think should be done in the meantime? “We have to take decisions in real time. We can’t duck them,” he told NPR.

Facebook may already be ahead of the game in that respect. Last year, the company created an Oversight Board to make these kinds of tough precedent-setting decisions. Think of it as the Facebook equivalent of the Supreme Court: it has 90 days to rule, its ruling is final, and Facebook must abide by its decision. Facebook has already asked its Oversight Board to decide whether Trump’s Facebook account should be reinstated now that he is no longer President. A decision is still pending.

No violation

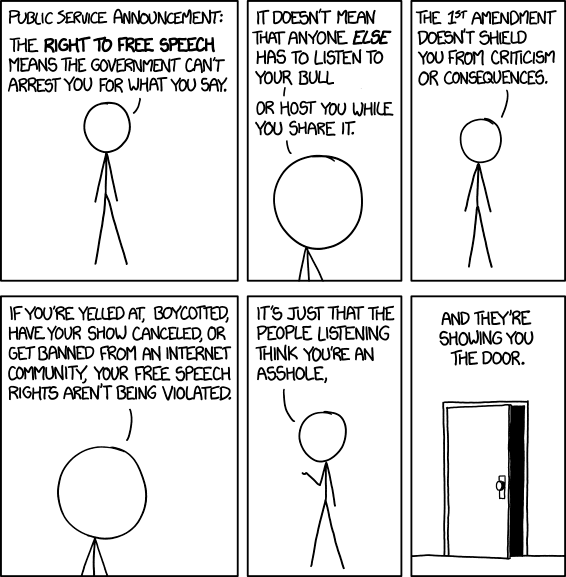

Regarding the First Amendment, it’s important to remember that the Amendment protects a citizen’s speech from restriction by their government. It does not bind private companies, which are entitled to create their own rules for their own products and services. Thus, Slack was absolutely correct in claiming that the scenario suggested by CentristPolitics.net would not be a violation of the First Amendment.

This leaves us, the voters and citizens, between a rock and a hard place. Why? The people support new rules for this kind of speech, and even Facebook supports them, so it should be easy to pass legislation about this, right? Sadly, it doesn’t work that way.

If you know how broken our political system is, then you know that an issue has only a 30% chance of becoming law, whether the public supports it or not. Also, getting Democrats and Republicans to agree on “democratically agreed standards” would be a Herculean task on its own! What we need to do is fix our broken political system first, so that legislation about issues like this, and every other issue you care about, is easier to pass in Congress. The first step to fixing this broken political system is to pass H.R. 1, the “For the People Act”. It’s not a cure-all, to be sure, but it’s a great first step in the right direction. If you want to do your part to help, we would welcome your assistance.
